AI Edge Gallery

AI Edge Gallery is built for people who want to try on-device AI on Android without relying on cloud processing for every task. It suits users exploring local models, prompt experiments, and privacy-focused mobile workflows.

Some people look for this app because they want AI features that can keep working on supported hardware even when they are away from a steady connection. Others want a simpler way to test mobile AI use cases from one place.

The app fits a growing interest in local AI tools on phones, especially for chat, image understanding, audio tasks, and model testing. It also appeals to users who want to download the APK directly from our website for easier access.

What Is AI Edge Gallery?

AI Edge Gallery is an Android app tied to Google's AI Edge project. It is presented as a way to run supported generative AI models directly on a mobile device instead of routing every task through a remote service. That changes what users can expect in terms of speed, privacy, and offline access in everyday use.

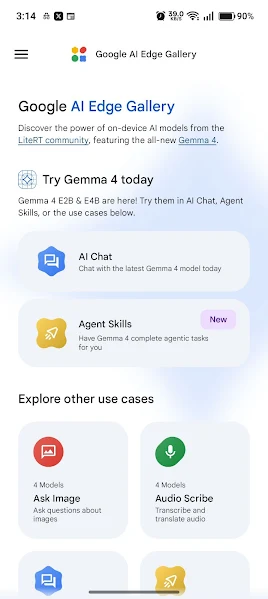

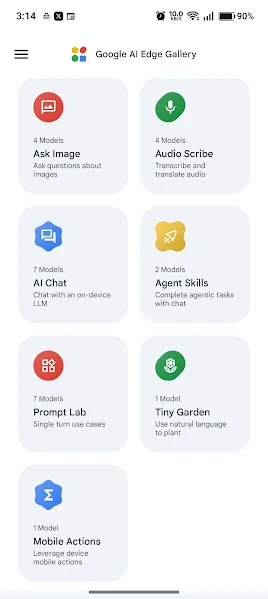

Public descriptions connect the app with several practical AI tasks rather than one narrow feature: chat-style interaction, image-based questions, prompt exploration, and audio-related capabilities. This broader positioning makes it feel more like a mobile AI sandbox than a single-purpose assistant.

The app also sits inside a wider edge AI context where lightweight runtimes, model downloads, and hardware-aware performance matter. That is important because on-device AI depends not only on the interface but also on the phone, storage space, memory behavior, and model choice. For many users, the app becomes a hands-on way to understand what local AI can really do on Android.

AI Edge Gallery matters because it helps bridge curiosity and real use. Some people want to test privacy-first AI workflows, some want to compare models, and some simply want a mobile tool for local experimentation. The app gives those users a more concrete path to explore AI without making every interaction depend on a cloud-only setup.

Why People Download the App

Many users search for AI Edge Gallery because they want to try local AI on a phone in a more direct way. Instead of reading about on-device models in technical posts, they want an app that lets them interact with the experience themselves. That practical entry point makes the app appealing to both curious users and developers.

Another reason people download it is convenience. A single app that brings together chat, image questioning, audio-related tools, and prompt testing feels easier than assembling multiple demos or separate utilities. Users who want one place to explore mobile AI behavior often prefer that kind of bundled experience.

Privacy also plays a major role in download intent. People increasingly care about when a task stays on their device and when data needs to leave it. An app centered on local execution naturally attracts users who want more control over sensitive prompts, personal notes, images, or spoken input.

The app can also solve a learning problem. On-device AI is often discussed in abstract terms, but many users need something they can install and test. AI Edge Gallery gives them a concrete environment where they can see how local models behave, where limitations appear, and what kinds of tasks work best on their own hardware.

Main Features

On-Device AI Chat

AI Edge Gallery can provide a chat-style interface for interacting with supported local models on Android. This helps users test everyday prompting, follow-up questions, and practical mobile conversations without depending entirely on remote processing.

Image Questioning and Visual Input

Public descriptions of the app include asking questions about images and trying multimodal models. That gives users a way to see how local AI interprets visual input, such as a photo or an image used as context, directly on a phone.

Audio-Related Capabilities

Public materials around the project also point to audio understanding and transcription-style use cases. This expands the app beyond simple text prompts and shows how local AI can support spoken or recorded input on supported devices.

Prompt Exploration

AI Edge Gallery is useful for trying structured prompts, testing wording changes, and comparing outputs across different tasks. That makes it practical for people who want to learn how prompt design changes results when the model runs on mobile hardware.

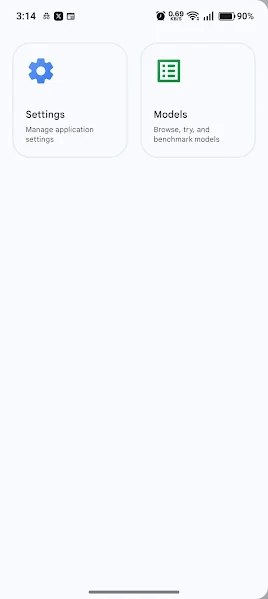

Model Discovery and Management

The app is built around browsing, loading, and trying different supported models. This matters because local AI performance can change significantly depending on the model size, task type, and device resources available.

Offline-Oriented Use

A major attraction of the app is that key experiences are framed around local operation on the device. For users who care about privacy or unreliable connectivity, that kind of setup can be more practical than cloud-first AI tools.

Core AI Tools in AI Edge Gallery

Chat Workspace

The chat area gives users a straightforward place to test question answering, summarization, brainstorming, and prompt refinement. It is often the first part people try because it makes local AI behavior immediately visible.

Ask Image

This function focuses on visual understanding. A user can supply an image and then ask the model to identify details, explain visible elements, or describe what appears in the frame in practical language.

Audio Scribe and Audio Tasks

Audio-related functions are useful for people exploring local speech handling or transcription-style workflows. These tools can help show how mobile AI extends beyond typed text and into spoken content.

Prompt Lab

Prompt testing is important when users want repeatable experiments rather than casual one-off chats. A prompt-focused area can help compare wording, structure, and task design more clearly.

Model Controls

Model-related screens matter because on-device AI requires choices about downloads, loading, and compatibility. These controls help users understand which models they can try and how those models fit the hardware.

How It Works

AI Edge Gallery works by giving the user an interface for running supported AI models on compatible Android hardware. Instead of acting only as a front end for distant servers, it is designed around local execution for certain experiences. That means performance depends on the phone, the selected model, and the kind of task being attempted.

In practice, the user chooses or loads a supported model, opens one of the main tools, and starts giving input. That input may be text, an image, audio, or a prepared prompt depending on the feature. The app then processes the request using local resources and returns the output through the mobile interface.

Because it is an edge AI app, the workflow has real technical boundaries. Larger models may require more patience, some devices will perform better than others, and storage matters because models can take space. Understanding those limits helps users get more realistic results from the app.
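
As a rough illustration of why model choice affects storage, the size of a model's weights can be approximated as the parameter count multiplied by the bits stored per weight. The sketch below is only an estimate under stated assumptions: the 2-billion-parameter figure and the two precisions are hypothetical and are not tied to any model the app offers, and real model files also carry metadata and runtime overhead.

```python
def estimate_weight_bytes(num_params: int, bits_per_weight: int) -> int:
    """Approximate size of the model weights alone, in bytes."""
    return num_params * bits_per_weight // 8


def to_gib(num_bytes: int) -> float:
    """Convert a byte count to gibibytes."""
    return num_bytes / (1024 ** 3)


# Hypothetical example: a 2-billion-parameter model at two precisions.
for bits in (16, 4):
    size = estimate_weight_bytes(2_000_000_000, bits)
    print(f"{bits}-bit weights: about {to_gib(size):.2f} GiB")
```

The same model takes roughly a quarter of the space at 4 bits per weight as it does at 16, which is one reason smaller or more compressed models tend to be the practical choice on phones.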

How to Use AI Edge Gallery

A typical user begins by opening the app, exploring the available model options, and choosing a feature that matches the task they want to test. Someone interested in everyday prompting may start with chat, while another user may head straight to image or audio tools. Starting with a simple task is usually the easiest way to understand the app.

After that, the main goal is to match the task to the right tool and the right expectations. Short prompts, clear instructions, and manageable inputs often produce a better first experience on mobile hardware. Users who want deeper testing can then compare results across different models or prompt styles.
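
One way to keep that kind of comparison organized is to write down every model and prompt-style pairing before testing. The sketch below builds such a plan on a computer; the model names, prompt templates, and the `build_test_plan` helper are all hypothetical and have nothing to do with the app's internals. The prompts would still be entered into the app by hand.

```python
from itertools import product

# Hypothetical model names and prompt styles, used only for illustration.
models = ["small-model", "larger-model"]
prompt_styles = {
    "plain": "Summarize this note: {text}",
    "structured": "Summarize this note in three bullet points: {text}",
}


def build_test_plan(models, prompt_styles, text):
    """Return one entry per (model, prompt style) pair."""
    plan = []
    for model, (style, template) in product(models, prompt_styles.items()):
        plan.append(
            {"model": model, "style": style, "prompt": template.format(text=text)}
        )
    return plan


plan = build_test_plan(models, prompt_styles, "Buy milk, call Sam, renew passport.")
for entry in plan:
    print(entry["model"], "|", entry["style"], "|", entry["prompt"])
```

Keeping the input text fixed while varying only the model or the wording makes it easier to tell which change caused a difference in the output.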

The app becomes more useful when it is treated as both a practical tool and a testing space. A student might use it to summarize ideas offline, a developer might inspect how local inference feels on a real phone, and a privacy-conscious user might prefer it for sensitive inputs. That flexibility is part of the app’s appeal.

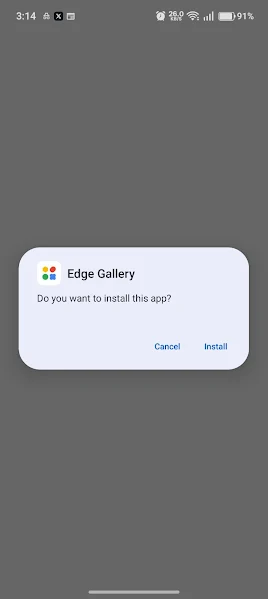

How to Download and Install the App

If you want to get started, you can download AI Edge Gallery APK directly from our website and keep everything in one place. That makes access simpler for users who prefer a direct route to the app file before beginning setup on Android.

Before installation, make sure your phone has enough free storage for both the app and any supported models you plan to try later. Local AI workflows can require more space than a lightweight standard app, so it is helpful to check storage early.
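
The storage check itself is simple arithmetic: add the app size to the sizes of the models you plan to try and leave some headroom. The sketch below shows that calculation with hypothetical sizes and an assumed 10% headroom; `has_enough_space` is an illustrative helper, and the script reads free space from the machine it runs on, so on a phone you would substitute the free-space figure shown in Android's storage settings.

```python
import shutil


def has_enough_space(free_bytes, app_bytes, model_bytes, headroom=0.10):
    """True if the app plus all planned models fit with some headroom left over."""
    needed = app_bytes + sum(model_bytes)
    return free_bytes >= needed * (1 + headroom)


MIB = 1024 ** 2
GIB = 1024 ** 3

# Hypothetical sizes; check the real figures for the files you download.
app_size = 150 * MIB
planned_models = [1 * GIB, 3 * GIB]

free_now = shutil.disk_usage(".").free  # free space on the current volume
print("Enough space:", has_enough_space(free_now, app_size, planned_models))
```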

Once the APK is on your device, open it and allow installation if your Android settings ask for permission from your browser or file manager. After the app is installed, you can launch it, review available tools, and begin exploring supported models and functions.

If you plan to use image or audio features, also check relevant permissions inside Android so the app can access what it needs for those tasks. A clean installation followed by a simple first test usually gives the best introduction to how the app behaves on your phone.

1. Download AI Edge Gallery APK directly from our website

2. Open the APK file on your Android device

3. Allow installation permission if Android requests it

4. Finish the installation and launch the app

5. Review permissions for storage, audio, or images if needed

6. Load a supported model and begin testing features

Device Compatibility

Android Phones

AI Edge Gallery is mainly suited to Android phones with reasonably current software and enough local resources for model-based tasks. Better hardware generally improves responsiveness, especially when users try more demanding local AI workflows.

Android Tablets

The app can also make sense on Android tablets where extra screen space may help with prompt testing, reading outputs, and managing model options. Performance still depends on the tablet’s chipset, memory, and storage situation.

Hardware Considerations

Device compatibility is not only about whether the app installs. It also depends on how comfortably the device can handle local inference, multimodal input, and model loading. Users with older or lower-powered hardware may still explore the app, but results can vary more.

Why This App Is Useful

AI Edge Gallery is useful because it turns a technical idea into a practical mobile experience. Many people hear about edge AI, local models, or privacy-first inference, but they need a real app before those concepts become meaningful. This app gives them that hands-on starting point.

It also helps reduce the gap between experimentation and daily use. A user can test prompts, inspect how visual or audio input is handled, and learn what mobile AI can do on actual hardware. That kind of direct contact is often more informative than reading feature lists alone.

Another advantage is control. Users who care about privacy, travel often, or deal with unstable internet connections may prefer tools that are not built around constant cloud dependence. Even when local AI has limitations, the ability to process tasks on-device can still offer practical value.

The app is also useful as a learning environment. Developers, hobbyists, students, and curious users can all observe how model choice, device power, and task design shape the final result. That makes AI Edge Gallery valuable not only as an app to use, but also as an app to understand.

Pros and Cons

Pros

- Supports hands-on local AI exploration

- Useful mix of chat, image, and audio-oriented tasks

- Fits privacy-focused mobile use cases

- Helps users understand on-device model behavior

- Can work well for practical testing on supported hardware

Cons

- Performance can vary widely by device

- Local models may need significant storage

- Some tasks may feel slower than cloud tools

- Feature availability can evolve over time

- New users may need time to understand model choices

Conclusion

AI Edge Gallery stands out as a practical way to explore on-device AI on Android through chat, image, audio, and prompt-based workflows. For users who want a direct mobile experience with local model testing, it offers a useful starting point. If you want to try it yourself, you can download the APK directly from our website and begin exploring what local AI feels like on your own device.